Cloud Data Warehouse Optimization

Introduction

According to the State of FinOps 2025 Report, workload optimization and waste reduction remains the top priority for FinOps practitioners, with 50% of respondents consistently prioritizing optimization efforts. Cloud Data Warehouses—including BigQuery (GCP), Amazon Redshift (AWS), Azure Synapse Analytics (Azure), and Snowflake—represent a critical component of the modern Data Cloud and account for significant cloud costs for many large enterprises. Unlike compute and container optimization, which are well-established practices, cloud data optimization requires specialized knowledge and technical planning to achieve cost reductions of 20-50%. This white paper is designed for key FinOps personas who drive cloud cost optimization initiatives and make decisions about Data Cloud investments.

This white paper provides comprehensive guidance for implementing workload optimization strategies for Data Cloud, based on extensive real-world experience in query cost optimization. Implementing these optimization techniques can reduce cloud data warehouse costs by 30-50% while improving cost visibility and operational efficiency.

Cloud Data Warehouse Services in the Data Cloud

The table below provides an overview of major Cloud Data Warehouse platforms within the Data Cloud ecosystem:

| Cloud Provider | Data Warehouse Service | Query Sku Name | Billing Unit | Billing Description |

|---|---|---|---|---|

| Google Cloud | BigQuery | BigQuery Analysis SKU | TiB processed (on-demand) or slot-hours (capacity) | Covers all query-related costs including SQL queries, user-defined functions, scripts, and DML/DDL statements |

| AWS | Amazon Redshift | Redshift Compute | Node-hours (provisioned) or RPU-hours (serverless) | Billing for compute resources in Redshift clusters. Provisioned clusters billed per node-hour based on instance type, while serverless uses Redshift Processing Units (RPUs) with 60-second minimum billing increments |

| Azure | Azure Synapse Analytics | Azure Synapse Analytics | TB processed (serverless) or DWU-hours (dedicated) | Serverless SQL pools charge per TB of data processed at $5/TB, while dedicated SQL pools use Data Warehouse Units (DWUs) billed per hour |

| Oracle Cloud | Autonomous Data Warehouse | Oracle Autonomous Data Warehouse–ECPU | ECPU-hours | Uses Elastic CPU (ECPU) billing metric with minimum 2 ECPUs required |

| Snowflake | Snowflake Data Cloud | Snowflake Credits | Credit-hours | Universal billing unit for all compute resources including virtual warehouses, serverless features, and cloud services |

Measures of Success & Key Performance Indicators

Effective measurement and cost allocation requires continuous monitoring of specific metrics that demonstrate optimization progress and support FinOps decision-making. These metrics should consistently trend downward over time as optimization strategies and cost management initiatives take effect.

| Metric | Description |

|---|---|

| Total Queries per Day | Total count of all queries executed daily across the data warehouse environment |

| Total Query Cost per Day | The cumulative financial cost of all query operations executed within a 24-hour period, supporting cost visibility and chargeback models |

| Average Query Cost per Day | Total daily query cost divided by total daily query count, providing insight into per-query efficiency and cost per unit of work |

| Average Query Duration | Measurement of query execution time, enabling identification of resource-intensive queries that contribute to increased costs |

Cloud Data Warehouse Optimization Best Practices

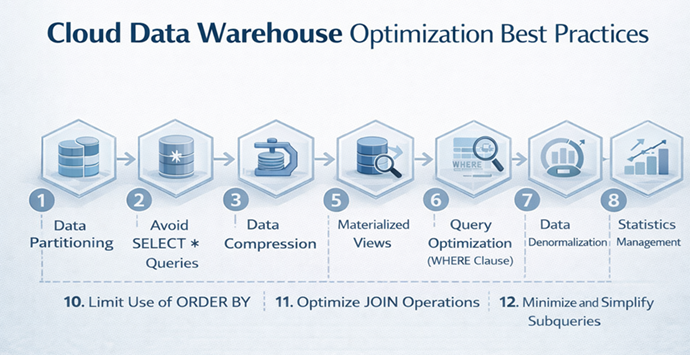

Large enterprises execute tens of thousands of queries monthly within their Data Cloud infrastructure, with total costs potentially reaching tens of millions of dollars per month due to inefficient query and storage design. The following universal optimization techniques apply across all Cloud Data Warehouse platforms and can achieve significant cost reductions while improving workload optimization maturity.

- Data Partitioning

Partition tables by commonly used filter columns to ensure queries access only relevant data subsets. This strategy dramatically reduces data scanning volumes, lowering compute costs and improving performance. BigQuery recommends partitioning over sharding to eliminate table management overhead, while Redshift and Synapse benefit from range, hash, and list partitioning strategies.

Synapse tables can be partitioned on date or key columns and combined with hash-distribution to ensure each node scans only its relevant data slice. Snowflake automatically partitions data into micro-partitions (typically 50–500 MB each) and stores min/max metadata for each partition. Implementing partition strategies aligned with common query filters (date, region, category) enables queries to read only a fraction of total data, establishing the foundation for efficient querying across all Cloud Data Warehouse platforms and reducing cost significantly.

2. Avoid SELECT * Queries

Selecting only necessary columns is universally critical in columnar storage systems. Since all Cloud Data Warehouse platforms utilize columnar storage, this practice directly reduces data processing and scanning, leading to significant cost savings. Limiting column selection reduces data reading and processing requirements, resulting in faster query execution and lower resource usage. In Snowflake, Redshift, and Synapse, unnecessary columns increase the data volume each node must read and transfer, degrading query performance. This straightforward best practice—selecting only required columns—not only reduces costs and runtime but also prevents errors by avoiding ambiguous or irrelevant data.

- Data Compression

Implementing appropriate compression algorithms reduces both storage costs and I/O operations across all platforms within your Data Cloud strategy. Redshift offers Zstandard compression achieving up to 65% disk space reduction. Compressed data requires less storage space and reduced I/O operations, significantly cutting costs and improving query speed. Amazon Redshift allows specifying column encodings or uses automatic compression. Azure Synapse (Dedicated SQL Pool) uses compressed columnstore indexes by default for large tables, yielding 3–10x compression. Google BigQuery automatically compresses data in its Capacitor columnar format, eliminating manual encoding requirements. Snowflake automatically compresses all table data and selects efficient algorithms per column, supporting cost optimization efforts.

- Clustering and Indexing

Creating indexes or clustering keys on frequently queried columns improves query performance and reduces scan time universally. In traditional databases, indexes serve this purpose; in cloud columnar warehouses, clustering or sort keys determine physical data order and enable data skipping. Oracle ADW uses automatic indexing through machine learning, while other platforms require manual configuration. Data clustering and ordering represents a high-impact technique that allows Data Warehouses to skip irrelevant data efficiently. Across all platforms, ensuring large tables are organized on disk by fields most relevant to queries (dates, keys, etc.) can achieve 20–90% performance improvements by skipping substantial data blocks in typical analytical workloads.

- Materialized Views

Utilizing materialized views provides a powerful mechanism for accelerating repetitive, complex queries by precomputing expensive operations and reusing results. Materialized views function as cached summary tables where query definitions (often including joins and aggregations) are stored with their results. BigQuery materialized views improve performance while reducing costs through decreased data scanning. All five Cloud Data Warehouse platforms support materialized views. Materialized views offer low-maintenance approaches to trading storage for dramatic performance gains, making them essential cost and performance optimization tools across BigQuery, Redshift, Synapse, and Snowflake within the Data Cloud ecosystem.

- Query Optimization (WHERE Clause)

Writing efficient WHERE clauses is essential for minimizing data processing and supporting cost visibility initiatives. Even with partitioning and clustering, poorly structured filters or unnecessary conditions can increase computational work. Applying highly selective filters (those removing the most rows) as early as possible represents a critical best practice. BigQuery assumes users provide optimal expression ordering and does not reorder automatically. Using filters intelligently—ordering conditions optimally, pushing filters deep into query plans, and avoiding constructs that prevent indexes or partition pruning—reduces CPU and I/O work, creating leaner and faster queries that support chargeback accuracy.

- Data Denormalization

Reducing joins through denormalization of frequently accessed data trades storage for compute efficiency. This approach is highly effective in Cloud Data Warehouses where storage costs are relatively low compared to compute costs. Cloud Data Warehouses often perform better with denormalized schemas (fewer, wider tables) compared to highly normalized schemas with many small tables. Denormalization—pre-joining or flattening data into consolidated tables—can reduce join costs and improve query speed by 25–50% in practice. Denormalized tables outperform star schemas on most queries, with significant average query time improvements in Redshift and Snowflake, and BigQuery. Well-designed denormalized tables or materialized views can bypass costly join operations, significantly speeding user queries and reducing compute costs within your Data Cloud.

- Statistics Management

Database query optimizers rely on up-to-date statistics – summaries of table data (such as row counts and how values are distributed) – to estimate the cost of different execution plans. When these statistics accurately reflect the current data, the optimizer can compare plan costs correctly and pick the fastest, most efficient plan. If the statistics are stale or missing, however, the optimizer’s estimates become unreliable and it may choose a suboptimal plan, dramatically slowing down query execution. In general, keeping statistics current ensures the optimizer has the right information (row counts, histograms, etc.) to correctly assess join orders and index usage, which leads to better performance, more efficient use of resources, and reduced compute cost—key objectives of workload optimization.

- Workload Management

Workload Management (WLM) involves system configuration to prevent resource contention between heavy and light queries while maximizing available compute utilization. WLM reduces costs by ensuring efficient resource allocation across queries, preventing expensive idle time and contention, and enabling features like auto-scaling, workload isolation, and prioritization. Configuring query queues and resource allocation prevents resource contention and supports cost allocation models. WLM allows priority assignment to different query types, managing how queries use resources through resource queues or priorities. Effective workload management prevents heavy queries from monopolizing resources, ensuring smooth performance across all workloads while optimizing spend.

- Limit Use of ORDER BY

Using ORDER BY only when necessary, especially in subqueries, reduces sorting overhead and unnecessary compute consumption. It is recommended to avoid ORDER BY in intermediate query steps. Judicious use of ORDER BY can significantly improve performance since compute nodes no longer perform costly merge-sort operations. This also conserves memory, preventing disk spills that can degrade performance for other queries and increase cloud costs.

- Optimize JOIN Operations

Optimizing joins is crucial because they are often the most resource-intensive part of SQL queries in Cloud Data Warehouses. To reduce processing time and cost, it’s important to minimize data movement by aligning tables on common join keys and structuring joins thoughtfully—such as placing the largest table first. Using techniques like collocated joins, broadcasting small tables, and pre-aggregating data before joins can significantly cut down on overhead. Avoiding unnecessary outer joins and ensuring clear join conditions also improves efficiency. These strategies apply across all platforms and can lead to major performance improvements and cost savings within your Data Cloud strategy.

- Minimize and Simplify Subqueries

Minimizing or rewriting subqueries—especially correlated or duplicated ones—into joins, window functions, or common table expressions helps reduce query repetition and improves parallelism, significantly lowering compute resource usage. This approach not only accelerates performance but also reduces Cloud Data Warehouse costs by avoiding inefficient nested operations and unnecessary data scans, supporting overall cost management objectives.

Conclusion

Implementing Cloud Data Warehouse optimization strategies with a FinOps mindset helps organizations manage costs more effectively while maintaining strong performance. By applying key workload optimization techniques across the Data Cloud, businesses can lower their cloud data warehouse costs by 20–50% and improve query performance by 30–50%. The twelve best practices outlined in this paper support cost visibility, efficient resource use, and reduced waste—core pillars of FinOps practice.

When paired with FinOps capabilities like Policy & Governance, Cost Allocation & Chargeback, Anomaly Management, and Reporting, these workload optimization strategies create a strong foundation for ongoing cost control and Data Cloud governance. Together, they ensure cloud data warehouse investments and Data Cloud infrastructure deliver maximum value.

How Finitizer can help

Finitizer provides an intelligent analysis engine purpose-built for Cloud Data Warehouse cost optimization, with deep support for Google BigQuery workload management. The platform analyzes query patterns, slot utilization, and billing data to generate actionable recommendations across four key optimization dimensions. Query Cost Optimizer identifies expensive queries and SQL anti-patterns—such as SELECT * statements, missing WHERE clauses, ORDER BY without LIMIT, CROSS JOINs, and missing partition filters—enabling teams to prioritize high-impact query rewrites that typically yield 30-70% cost reductions. Daily cost trend monitoring with 7-day and 30-day moving averages provides visibility into spending patterns and anomaly detection. Slot Management capabilities include health scoring for reservations, utilization monitoring against 85% targets, and queue time analysis to balance cost efficiency with query performance.

Capacity Tuning recommendations guide baseline and auto scale slot adjustments based on percentile-based usage patterns (p50-p75 for baseline, p95-p97 for maximum). Peak Hour Analysis identifies temporal bottlenecks and suggests workload rescheduling strategies to distribute demand more evenly. Slot Commitment Planning analyzes current usage to recommend optimal commitment levels, comparing pay-as-you-go, 1-year, and 3-year commitment options with blended savings calculations.

Beyond analysis, Finitizer delivers practical tooling that accelerates implementation and supports FinOps governance. The platform provides step-by-step implementation guides with copy-ready bq and gcloud commands, exportable CSV and PDF reports for stakeholder communication, and direct links to relevant Google Cloud documentation. Project-level Spill & Waste Analysis evaluates memory efficiency, execution performance, and resource utilization to identify projects requiring optimization attention. Each recommendation is prioritized by potential savings impact and includes monthly and annual savings estimates, enabling organizations to focus resources on the highest-value opportunities first. This combination of intelligent query analysis, slot management optimization, and commitment planning enables organizations to achieve 20-50% Data Cloud cost reductions while maintaining query performance and building the organizational capabilities required for continuous workload optimization.